Resource Hub

The latest thinking from the world of compliance and regtech.

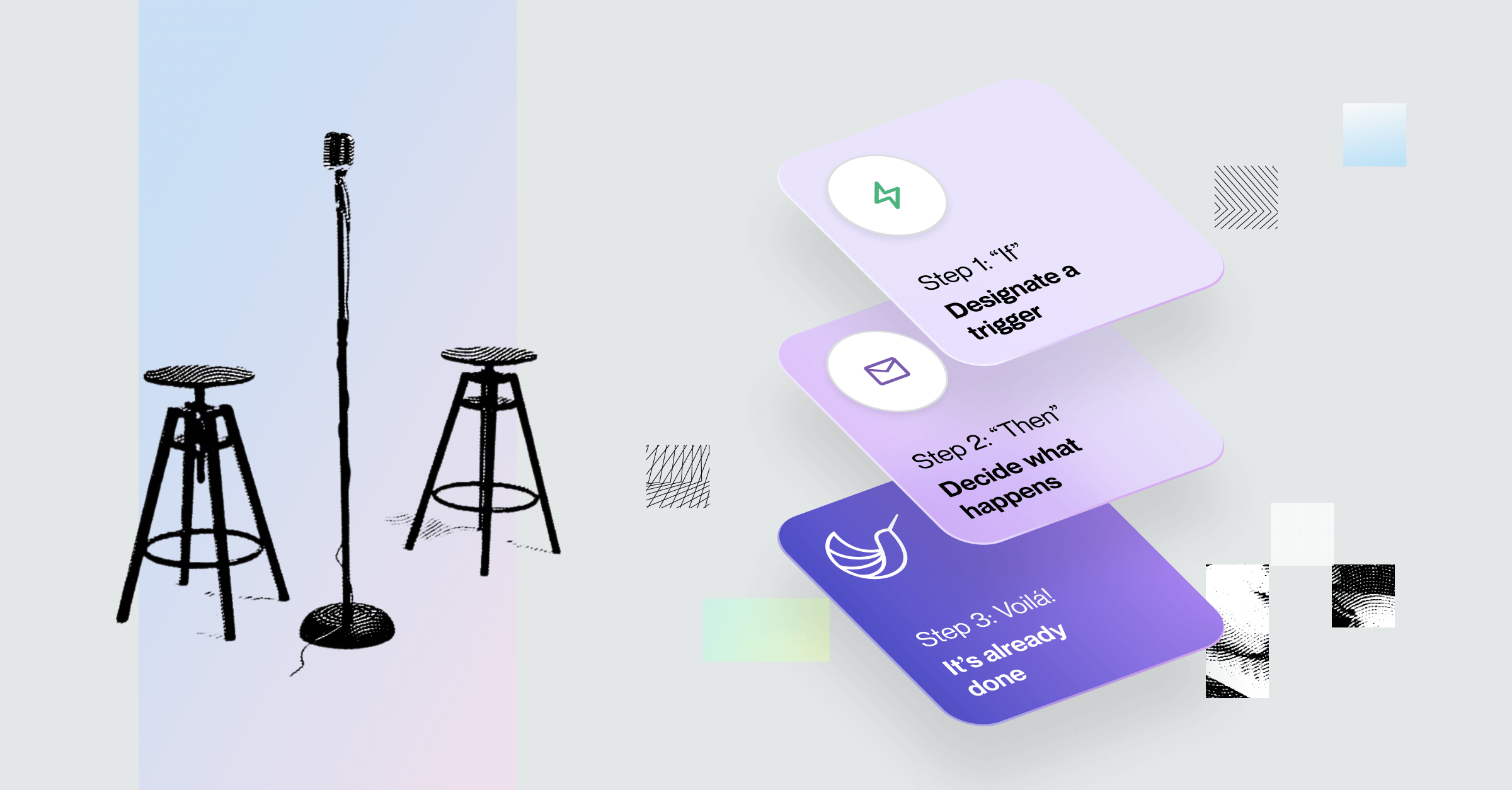

Spring ’24 Product Update: Automations, 4 New Apps (Gmail, Outlook, Google Sheets, Excel), and More

Courtney Chuang

Head of Product Marketing

Hummingbird Labs

Our work building compliance-grade AI to help fight financial crime.

Stay Connected

Subscribe to receive new content from Hummingbird

Downloads

Tools, guides, e-books, and other helpful compliance materials.

Insights

Opinions and big-picture thinking about the future of compliance.

Product

The latest releases and new features for Hummingbird's platform.

Learning

Important compliance topics focused on key concepts and contextual relevance.
